By now, Artificial Intelligence (AI) is all the talk. As I travel, I hear faculty with strong views for and against it. What I observe is fear of the unknown. And yet this moment feels familiar, as if we have been here before, at the dawn of the internet. The difference is that the spiral has moved to the next level. AI is forcing us to rethink how we design learning, how we assess learning, and how we prepare students for a world that is shifting right now.

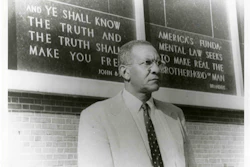

If we are honest, some of our habits in higher education have kept us comfortable. The days of recycling old quizzes and lecture notes, running the same course the same way year after year, are fading fast. AI exposes what is weak: unclear assignments, low-level recall assessments, and teaching that does not require students to explain, justify, or apply ideas. At the same time, AI can strengthen what is good when we use it with intention.

Dr. Peter Eley

Many universities are rushing to develop AI policies or “playbooks.” Policies can sound strong, but they are often rigid in a landscape that changes every month. A playbook is designed to adjust. Imagine an NFL team’s playbook: it changes weekly based on the opponent. That is the mindset we need — a consistent review cycle, clear expectations, and a feedback loop that tells us what works, what does not, and what needs updating.

For HBCUs, this is not just philosophical; it is a capacity issue. Keeping guidance current requires time, expertise, and infrastructure. Without that, institutions risk rules that are outdated or inconsistent. We need guidance that protects academic integrity and faculty autonomy while also helping students build critical thinking, deductive reasoning, and ethical habits of mind.

The breakneck pace of development in both generative AI and agentic AI creates real challenges, especially for HBCUs and liberal arts institutions. Professional development cannot be a one-time workshop and a checklist. We need financially prudent options, and we need to identify early adopters who have the time and desire to retool, then support them so they can support others.

The question is not only “How do we learn AI?” but “How do we consistently evolve with AI?” My recommendation is that we stay principle-based. Sound pedagogy will not be replaced by AI; it will be amplified, and weak practice will be exposed. Strong deductive reasoning is essential for using AI wisely. Remember high school geometry proofs? Many asked, “Why do I need this?” That time has arrived. AI outputs need constraints and verification. Our assignments should require students to show their thinking, name their assumptions, and explain how they verified a result.

Another barrier to access is cost. Many tools provide free access to older models or teaser access. We are also seeing simplified versions bundled into platforms like Microsoft 365 (Copilot) and Google (Gemini). Tools like ChatGPT and Claude often offer flat-fee tiers with usage limits, while many video and voice tools use metered credits.

So the question becomes: how do budgets change to provide students and faculty access to learn, explore, and compare models? What happens when students hit usage limits in the middle of a project? For HBCUs and smaller institutions, these limits can quickly become equity issues. If we want students to earn micro-credentials alongside degrees, institutions must decide how costs are covered and how upgrades are managed.

Most of our AI conversation is about being consumers of established tools. Much less is said — especially in HBCU circles — about being developers of AI. I am talking about building generative tools and agentic systems, not only using what others create. This is a critical moment to provide access and a sandbox for our students to learn how to build, test, and improve applications.

Diversity of thought and input is critical so that AI reflects our voices, our skin tones, our culture, and our realities. If we are not at the table, others will interpret the world for us, and that is only if we are included at all.

AI alliances are forming to help institutions move from questions to action. A viable solution for HBCUs to stay current and grow capacity is to join and strengthen AI alliances, including regional efforts like the Alliance for AI Transformation (alliance-4ai.org). These alliances create space to share policy drafts, collaborate on grants, and provide training that would be difficult to sustain alone. There is strength in numbers. If we are willing to work as a collective, we can navigate this new age of AI with clarity and mastery.

Dr. Peter Eley is a professor and interim research director at Alabama A&M University. He is also the founder and host of the Digital HBCU podcast (thedigitalhbcu.com), where he and guests explore how HBCUs can leverage AI, data, and digital innovation to expand access and accelerate institutional impact.

This commentary originally appeared in the April 2, 2026 edition of The EDU Ledger.